Projects

Disentanglement Error library

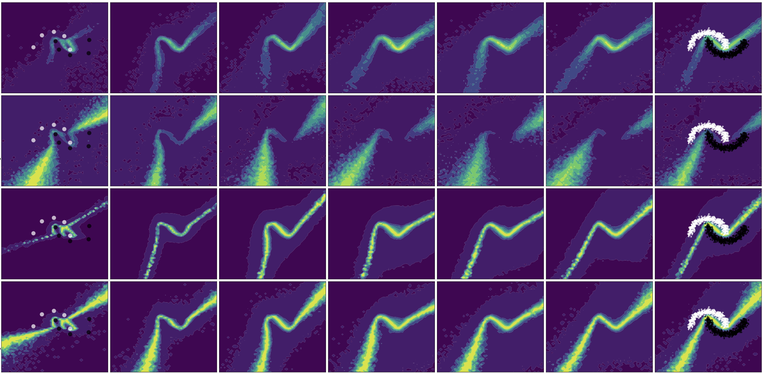

There are two reasons why Machine Learning models make mistakes. Either the task itself is noisy, so no model can always be correct (aleatoric uncertainty), or the model has not yet learned enough about the task (epistemic uncertainty).

We have had methods to estimate both of these for a while (for Neural Networks since 2017), but we had no way to evaluate whether those estimates were actually good.
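As an illustration of what such an estimate can look like, below is a minimal sketch of one widely used recipe for ensembles or MC-Dropout: the entropy of the averaged prediction (total uncertainty) is split into the average entropy of the individual predictions (aleatoric) and the remainder, the mutual information (epistemic). This is only one common estimator, not necessarily the one used by the Disentanglement Error library; the function name `decompose_uncertainty` and the input layout are assumptions for illustration.

```python
import numpy as np

def decompose_uncertainty(probs: np.ndarray, eps: float = 1e-12):
    """Entropy-based split of predictive uncertainty (illustrative sketch).

    probs: array of shape (n_members, n_samples, n_classes) holding softmax
    outputs from ensemble members or MC-Dropout forward passes (assumed layout).
    """
    mean_probs = probs.mean(axis=0)  # averaged prediction, (n_samples, n_classes)
    # Total uncertainty: entropy of the averaged prediction.
    total = -(mean_probs * np.log(mean_probs + eps)).sum(axis=-1)
    # Aleatoric: expected entropy of each member's own prediction.
    aleatoric = -(probs * np.log(probs + eps)).sum(axis=-1).mean(axis=0)
    # Epistemic: mutual information, i.e. what remains after removing aleatoric.
    epistemic = total - aleatoric
    return total, aleatoric, epistemic

# Two members, two inputs: on the first input both members are unsure (noise),
# on the second they are each confident but disagree (lack of knowledge).
probs = np.array([
    [[0.5, 0.5], [1.0, 0.0]],  # member 1
    [[0.5, 0.5], [0.0, 1.0]],  # member 2
])
total, aleatoric, epistemic = decompose_uncertainty(probs)
print(aleatoric, epistemic)
```

The first input ends up with almost all of its uncertainty labelled aleatoric, the second with almost all of it labelled epistemic, even though both have the same total uncertainty.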

The Disentanglement Error lets you measure whether these two kinds of uncertainty are estimated independently. When one source of uncertainty changes, the estimate of the other should not be affected. This is an important property of disentangled uncertainties.
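To make the independence property concrete, here is a toy check under stated assumptions; it is not the library's Disentanglement Error, whose exact definition is not given here. A hypothetical two-member ensemble for a binary task keeps the noise level fixed (both members centred on 0.5) while the members disagree by a growing `spread`, standing in for epistemic uncertainty. If the estimates were perfectly disentangled, the aleatoric estimate would not move. The names `toy_ensemble_estimates` and `leakage` are invented for this sketch.

```python
import numpy as np

def binary_entropy(p):
    # Clip to avoid log(0) at the edges of [0, 1].
    p = np.clip(p, 1e-12, 1 - 1e-12)
    return -(p * np.log(p) + (1 - p) * np.log(1 - p))

def toy_ensemble_estimates(p_true, spread):
    """Hypothetical two-member ensemble: members predict p_true -/+ spread."""
    members = np.array([p_true - spread, p_true + spread])
    total = binary_entropy(members.mean())     # entropy of the averaged prediction
    aleatoric = binary_entropy(members).mean()  # expected per-member entropy
    epistemic = total - aleatoric               # mutual information
    return aleatoric, epistemic

# Keep the noise level fixed and change only the epistemic spread.
spreads = np.linspace(0.0, 0.4, 5)
aleatoric_estimates = np.array([toy_ensemble_estimates(0.5, s)[0] for s in spreads])

# Hypothetical leakage score: how far the aleatoric estimate drifts when only
# epistemic uncertainty changed. A perfectly disentangled estimator gives 0.
leakage = aleatoric_estimates.max() - aleatoric_estimates.min()
print(f"aleatoric estimates: {np.round(aleatoric_estimates, 3)}")
print(f"leakage: {leakage:.3f}")
```

In this toy setting the entropy-based aleatoric estimate shrinks as the members disagree more, so a change in epistemic uncertainty leaks into the aleatoric estimate. Catching this kind of entanglement is the motivation for measuring disentanglement explicitly.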

Uncertainty in Machine Learning Symposium

The Uncertainty in Machine Learning symposium brought together active researchers studying how to identify when Machine Learning systems make mistakes. The event covered both fundamental research and applications in LLMs, Education, Healthcare and Computer Vision, as well as ethical considerations for the safe and responsible deployment of AI.

The event was recorded, and those recordings are still available. If you’re interested, feel free to send me a message and I will share the recordings with you.
